Protecting Your Platform's Reputation

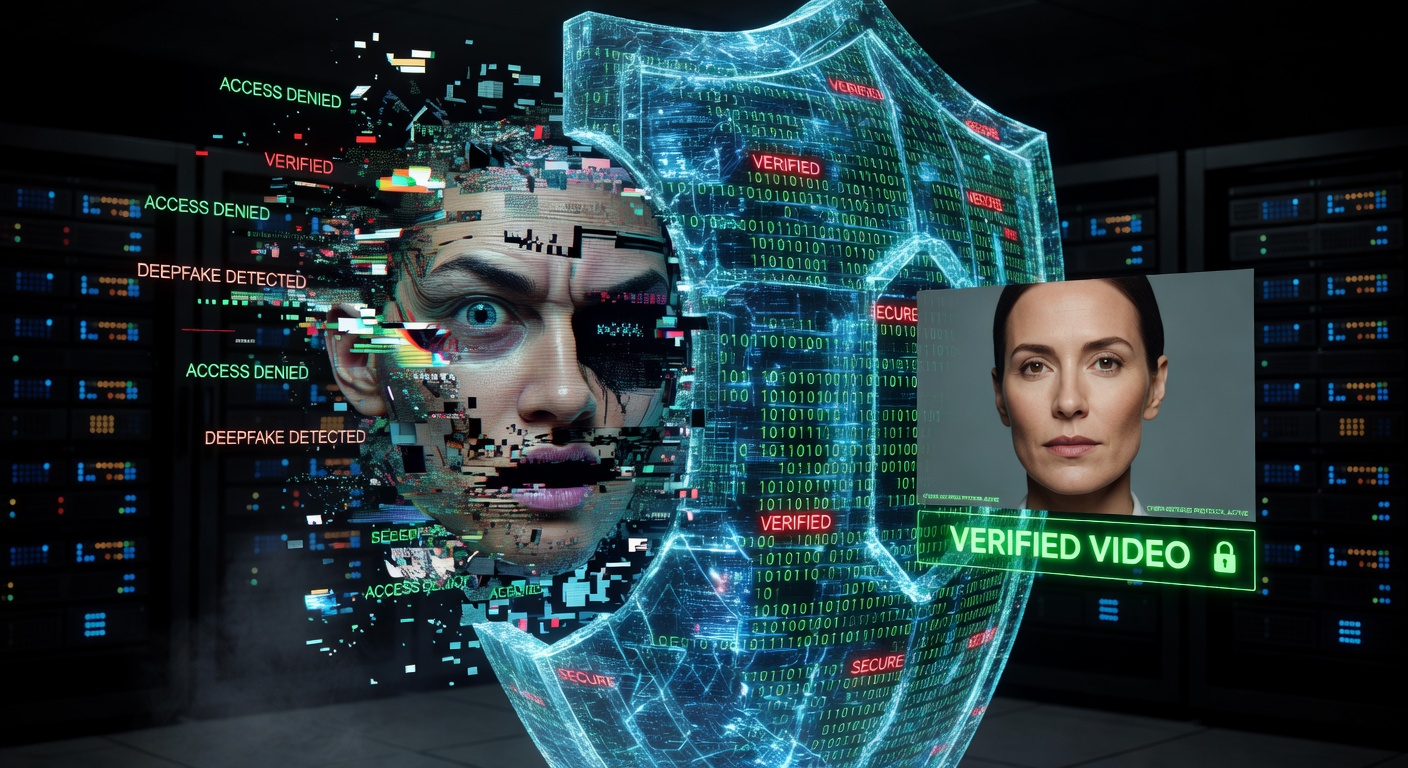

The biggest risk for an AI video platform isn't just regulation; it's liability. If someone uses your tool to generate a malicious deepfake, you need to be able to prove that you took "reasonable steps" to label it, trace it, and prevent misuse.

Accountability Through Provenance

By embedding a unique, invisible forensic ID into every video generated on your platform, you create a permanent, cryptographically verifiable link between the content and the specific account and timestamp that created it. This is not just good ethics; it's a legal defense.

What Does Accountability Look Like in Practice?

When law enforcement or a legal team subpoenas content from your platform, you can produce:

1. The C2PA manifest showing the exact generation parameters, timestamp, and platform account.
2. The forensic watermark embedded in the video pixels, surviving even re-uploads and re-edits.
3. An audit trail in your dashboard showing every video processed and its provenance record.

This three-layer evidence stack is what separates platforms that survive deepfake litigation from those that don't.
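To make the link between content, account, and timestamp more concrete, here is a minimal Python sketch of a keyed forensic ID and a tamper-evident audit trail. It is an illustration under stated assumptions, not LexPixel's implementation and not the C2PA manifest format: the names `PLATFORM_KEY`, `forensic_id`, `append_audit_record`, and `verify_log` are hypothetical, and the sketch does not embed anything into video pixels. It only shows how a record could be bound to an account and timestamp and later checked for tampering.

```python
import hashlib
import hmac
import json

# Hypothetical secret for illustration; a real deployment would keep this in a key-management system.
PLATFORM_KEY = b"example-secret-key"


def forensic_id(account_id: str, created_at: str, video_bytes: bytes) -> str:
    """Derive a keyed ID that binds the account, the timestamp, and a hash of the content."""
    content_hash = hashlib.sha256(video_bytes).hexdigest()
    message = f"{account_id}|{created_at}|{content_hash}".encode()
    return hmac.new(PLATFORM_KEY, message, hashlib.sha256).hexdigest()


def append_audit_record(log: list, account_id: str, created_at: str, video_bytes: bytes) -> dict:
    """Append a record that also stores the previous record's hash (a simple hash chain)."""
    prev_hash = log[-1]["record_hash"] if log else "0" * 64
    record = {
        "account_id": account_id,
        "created_at": created_at,
        "forensic_id": forensic_id(account_id, created_at, video_bytes),
        "prev_hash": prev_hash,
    }
    # Hash the record body deterministically so later edits become detectable.
    record["record_hash"] = hashlib.sha256(
        json.dumps(record, sort_keys=True).encode()
    ).hexdigest()
    log.append(record)
    return record


def verify_log(log: list) -> bool:
    """Recompute every hash and check that each record points at its predecessor."""
    prev = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        expected = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
        if record["prev_hash"] != prev or record["record_hash"] != expected:
            return False
        prev = record["record_hash"]
    return True
```

Because each record stores the hash of the one before it, altering or deleting an earlier entry causes `verify_log` to return `False`. That property is the kind of evidence an audit trail is meant to provide when records are requested later.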

Legal Safe Harbors

Under the EU AI Act and emerging US legislation targeting synthetic media, such as the TAKE IT DOWN Act (signed into law in 2025) and various state-level deepfake statutes, platforms that implement state-of-the-art transparency tools are in a much stronger legal position. A documented compliance stack demonstrates the "reasonable steps" standard that courts and regulators look for.

The Cost of Not Acting

The EU AI Act's fines for breaching its transparency obligations can reach €15 million or 3% of global annual turnover, whichever is higher. Class-action deepfake lawsuits in the US are already being filed. The cost of LexPixel integration is orders of magnitude less than a single legal incident.

Verdict

Forensic watermarking is your platform's defense layer. Don't launch AI video generation features without it.

Frequently Asked Questions

Can these watermarks be removed?

While no security system is 100% unbreakable, LexPixel's neural watermarking is designed to withstand multiple rounds of re-encoding, cropping, and standard editing filters. Attempted removal itself creates forensic artifacts that can be detected.

What is the TAKE IT DOWN Act and how does it affect AI platforms?

The TAKE IT DOWN Act, signed into US law in 2025, criminalizes the non-consensual publication of intimate deepfake imagery and requires platforms to remove such content within 48 hours of notification. Platforms with forensic watermarking can trace and remove content far more efficiently, demonstrating good-faith compliance.
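As a rough illustration of the 48-hour window, the sketch below computes a removal deadline from the time a notification was received. The names `REMOVAL_WINDOW`, `removal_deadline`, and `is_overdue` are hypothetical, and the sketch assumes the clock starts at the moment of notification. What counts as a valid notification is a legal question this code does not answer.

```python
from datetime import datetime, timedelta, timezone

# The 48-hour removal window described above.
REMOVAL_WINDOW = timedelta(hours=48)


def removal_deadline(notified_at: datetime) -> datetime:
    """Return the latest time by which the reported content should be removed."""
    return notified_at + REMOVAL_WINDOW


def is_overdue(notified_at: datetime, now: datetime) -> bool:
    """True if the removal window has already elapsed at time `now`."""
    return now > removal_deadline(notified_at)


# Example: a notification received on 1 March 2025 at 09:30 UTC.
notified = datetime(2025, 3, 1, 9, 30, tzinfo=timezone.utc)
deadline = removal_deadline(notified)  # 3 March 2025, 09:30 UTC
```

Using timezone-aware timestamps throughout avoids ambiguity when notifications and removals are logged by servers in different regions.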

Does forensic watermarking make my platform legally immune?

No. But implementing C2PA provenance and forensic watermarking demonstrates 'reasonable steps' toward preventing harm — a key legal defense standard under both the EU AI Act and US tort law. It is a risk reduction tool, not an absolute shield.